Joginder Anand, a longstanding reader of the BMJ, wants to know how we can encourage authors to respond. In a recent email he asks: “Should the BMJ not make it mandatory for the leading authors of all articles to respond to criticisms or requests for clarifications?”

My question back to him is: how? What would be the penalty?

Dr Anand suggests banning further publication in the journal.

Would that work? I don’t think so. Many of our authors are busy clinicians or researchers. Often they intend to respond, but finding the time to do so is a challenge. We are delighted when they do, but acknowledge it isn’t always feasible.

All corresponding authors get alerted when a response is posted to an article they have written, and are encouraged to respond. We don’t specify a time limit before posting. Many choose to wait for days or even weeks, or perhaps until their article has generated a few responses, before addressing the points raised in a single response back. Occasionally we contact authors and ask them to respond.

There are other ways of engaging authors. All articles include the email addresses of the corresponding authors. Readers often contact them directly if they have questions or comments. Also, many authors are active on Twitter and Facebook, and use these channels to field feedback when they have written an article.

But Dr Anand makes an important point, and I discussed his email at the BMJ hack day two weeks ago, when I outlined some of the challenges facing the scholarly publishing industry to the web developers who took part.

I reminded them that post-publication discussion is seldom confined to the actual article these days, and asked for their views on how a journal can easily capture all the “noise” an article generates, for example with an at-a-glance dashboard that pulls in metrics, citations, page ranks, social media, and press coverage.

Or how can the discussion drill down into the very article itself, generating discussion threads around particular sentences, paragraphs, or graphs? How can such feedback be sorted so that, for example, a cardiologist can focus on the feedback provided by other cardiologists?
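For the developers among our readers, here is one way the idea might be sketched in Python. It is only an illustration under my own assumptions, not anything built at the hack day: the `Comment` fields, the anchor names, and the sample comments are all invented. Each comment is tied to a part of the article and tagged with the commenter's professional group, so threads can be grouped by location and filtered by specialty.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Comment:
    anchor: str     # hypothetical ID of the sentence, paragraph, or graph
    specialty: str  # commenter's self-declared professional group
    text: str


def threads_by_anchor(comments, specialty=None):
    """Group comments by the part of the article they refer to.

    If `specialty` is given, keep only comments from that group.
    """
    threads = defaultdict(list)
    for comment in comments:
        if specialty is None or comment.specialty == specialty:
            threads[comment.anchor].append(comment)
    return dict(threads)


# Invented sample data, for illustration only.
comments = [
    Comment("conclusions-p3", "cardiology", "Was blood pressure adjusted for?"),
    Comment("conclusions-p3", "general practice", "How does this apply in primary care?"),
    Comment("figure-2", "cardiology", "The confidence intervals look wide."),
    Comment("methods-p1", "statistics", "Why this follow-up period?"),
]

all_threads = threads_by_anchor(comments)
cardiology_threads = threads_by_anchor(comments, specialty="cardiology")

# The anchor that has attracted the most comments overall.
most_discussed = max(all_threads, key=lambda anchor: len(all_threads[anchor]))
```

A cardiologist's view would then show only `cardiology_threads`, while `most_discussed` points to the part of the paper generating the most debate.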

This was the challenge. And of the 13 hack presentations on the Sunday, four addressed the need to revolutionise scholarly publishing.

One tool, the Evolving Journal hack, stands out. Devised by Jonathan Asiedu, Jeremy Chui, Ben Heubi, James Lethem, and Harry Tanner, the platform “adds value over time by considering the inputs, outputs, questions, and criticism by the medical community of multidisciplinary professionals after the peer review process.

“Effectively, we enrich the journal by providing further context to the document through conservation, semantic linking, and open data access,” they claim.

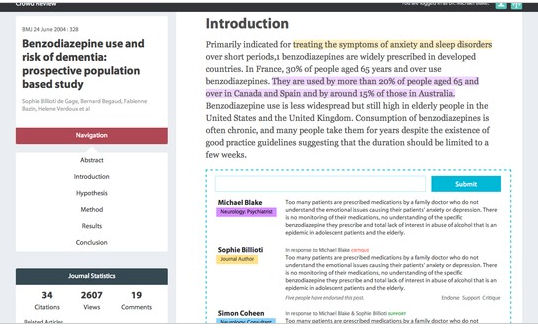

The team took a BMJ paper published in 2004 about benzodiazepine use and risk of dementia. As you can see from the image above, the tool lists views, comments, and citations on the left, along with a clear way of navigating the paper. The article is on the right, using colour-coded links to cluster different professional groups.

The team’s accompanying YouTube video pitch explains more.

The project doesn’t directly address Dr Anand’s concerns, but it makes it easier for both authors and readers to assess a paper’s impact at a “macro” level (citations and page views) and at a “micro” level (paragraph three of the conclusions seems to have generated a great deal of debate, for example).

And if an author’s “badge” were colour-coded in the same way as his or her professional background, readers could more easily see how often he or she had engaged with an article’s community of readers.

David Payne is editor, bmj.com and readers’ editor.