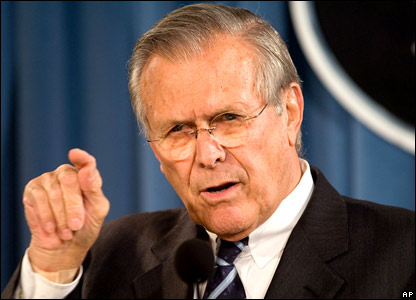

Along with Rumsfeld, the drug-addled Dr. House and everyone who’s ever sat an exam, we can all recollect times when we knew that we didn’t know something.

And we have times when we know something.

And we have times when we learn about something we didn’t know we were unaware of. (Varenicline anyone?)

And we also have times when we think we know something, but aren’t quite sure. I think these are the most common ones. These are the times when it would be nice to be able to describe and understand the limit of our certainty. This is the concept of the ‘95% confidence interval’ (CI).

The confidence interval is usually interpreted as the range within which the “true effect” of treatment (or prognostic estimate, or whatever) may lie. In most cases, we use a 95% confidence interval: that is to say that the “true effect” has a 2.5% chance of being lower than the bottom of the range, and a 2.5% chance of being higher than the top. Intervals don’t have to be 95%, but they usually are. With a 90% confidence interval, there’s a 5% chance of the “true effect” being lower and a 5% chance of it being higher.
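To make the arithmetic of the tails concrete, here is a minimal Python sketch. It assumes the estimate is roughly normally distributed (an assumption of this example, not something every study satisfies), and the function name `tails_and_multiplier` is made up for illustration. It shows how much is left over in each tail for a given confidence level, and how many standard errors either side of the estimate the interval stretches.

```python
from statistics import NormalDist  # standard library, Python 3.8+


def tails_and_multiplier(level):
    """Return (chance in each tail, multiplier for the standard error).

    Assumes a normally distributed estimate; `level` is e.g. 0.95.
    """
    tail = (1 - level) / 2                # whatever is left over, split evenly
    z = NormalDist().inv_cdf(1 - tail)    # standard normal quantile
    return tail, z


for level in (0.90, 0.95, 0.99):
    tail, z = tails_and_multiplier(level)
    print(f"{level:.0%} CI: {tail:.1%} in each tail, "
          f"estimate +/- {z:.2f} standard errors")
```

The wider you want your confidence, the bigger the multiplier and so the wider the interval, which is why a 99% CI from the same data is always broader than the 95% one.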

The value of the confidence interval, as opposed to the p-value, is in the estimate of effect. Take the fictitious Drug A vs Drug B, with RR = 2, 95% CI = 1.01 to 4.0, and p = 0.049. The p-value tells you ‘if the two drugs were really no different, you’d see a difference at least this big about 1 time in 20’, but the CI describes ‘drug A probably doubles the chances of success, might be as bad as just a bit better, might be as good as 4 times the success rate’.

It’s relatively easy to find CIs now in most major journal articles, but if they aren’t reported for the study you’re looking at, there are some good online tools that can calculate them for you.
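If you’d rather see what such a calculator is doing, the following is a rough Python sketch for a relative risk. It assumes the common log-transformation approach (sometimes called the Katz method), which may not be what any particular online tool uses, and it won’t cope with zero events in either arm. The function name `relative_risk_ci` and the trial counts are invented for illustration.

```python
import math


def relative_risk_ci(events_a, total_a, events_b, total_b, z=1.96):
    """Relative risk of A vs B with an approximate CI (log method).

    z=1.96 gives a 95% interval; use about 1.645 for 90%.
    Assumes at least one event in each arm.
    """
    rr = (events_a / total_a) / (events_b / total_b)
    # Standard error of ln(RR)
    se_log_rr = math.sqrt(
        1 / events_a - 1 / total_a + 1 / events_b - 1 / total_b
    )
    lower = math.exp(math.log(rr) - z * se_log_rr)
    upper = math.exp(math.log(rr) + z * se_log_rr)
    return rr, lower, upper


# Made-up trial: 20 of 100 succeed on Drug A, 10 of 100 on Drug B
rr, lower, upper = relative_risk_ci(20, 100, 10, 100)
print(f"RR = {rr:.2f}, 95% CI = {lower:.2f} to {upper:.2f}")
```

With these invented counts the relative risk is 2, but the lower end of the interval dips just below 1, so the same ‘doubling’ looks rather less convincing than in the Drug A vs Drug B example.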

Confidence can be gauged, and this is much more clinically useful than being told the chance of something being nothing.

Acknowledgment: Excellent blog article on related topic here