Lots of debates could be had off this title. When is an ‘audit’ an audit, and when is it a cloaked piece of poor-quality retrospective research? Why is ‘research’ considered better just because it’s ‘special’? What makes research study data forms nearly impossible to understand without spending 3 days in a steam hut wearing just a loincloth made of old patient information leaflets and drinking far too much Red Tea?

What I think is worth taking up, for just a bit though, is: “What is routinely collected hospital data, and what is its relationship with the real world?”

There’s a paper in the Archives that looks at bone and joint infections in a geographically defined region of France, examining the nature and epidemiology of the conditions under study. The authors undertook specific, prospective data collection to try to capture every bone or joint infection, but they also used routinely collected data – the discharge coding from the institutions – to see whether further cases were identifiable.

Now – this isn’t the point of the paper, which is all clever epidemiology and stuff – but it’s fascinating to look at what the data sources showed.

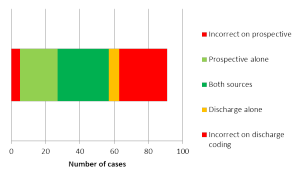

On independent expert review, 5 of the 57 cases from the prospective data collection were judged not to be bone/joint infections as strictly defined. That’s just under 9% incorrect diagnosis in the study setting.

On the discharge coding though, a whopping 47% of the coded bone/joint infections were incorrect.

(They did pick up an extra 6 cases, about 10% of their total, through this route though, so some must have slipped through the prospective net.)

The idea of doing a prospective study is great. The confirmatory aspect of looking for these admittedly rare diagnoses, by having a second ‘net’ to catch them in, is excellent.

The worrying bit is that it makes me question, quite significantly, how much we can believe reports based on coded diagnoses without some serious degree of data quality checking. What if 50% of the ‘cases’ don’t actually fulfil the diagnostic criteria at all? Shouldn’t the whole report be flung firmly into the rubbish bin?
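
To put rough numbers on that worry, here is a minimal Python sketch of the arithmetic. The 5/57 and 47% figures are the ones quoted above; the 200-case report is invented purely for illustration, and the function names are my own, not anything from the paper.

```python
def percent_incorrect(incorrect: int, total: int) -> float:
    """Share of cases judged incorrect, as a percentage."""
    if total <= 0:
        raise ValueError("total must be positive")
    return 100.0 * incorrect / total


def expected_true_cases(coded_cases: int, percent_wrong: float) -> float:
    """Cases expected to survive review if percent_wrong of the codes are incorrect."""
    if not 0.0 <= percent_wrong <= 100.0:
        raise ValueError("percent_wrong must be between 0 and 100")
    return coded_cases * (100.0 - percent_wrong) / 100.0


# Figures quoted in the post: 5 of 57 prospective cases rejected on review.
study_error = percent_incorrect(5, 57)

# Hypothetical report (an assumption, not from the paper): 200 cases
# identified from discharge codes alone, with the 47% error rate quoted above.
survivors = expected_true_cases(200, 47.0)

print(f"Prospective collection: {study_error:.1f}% incorrect")
print(f"Hypothetical coded report: about {survivors:.0f} of 200 cases are real")
```

On those assumptions, barely half of the hypothetical report's cases would stand up to expert review, which is why the coding error rate matters far more than the study's own.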

– Archi

EDIT – Another paper – looking at routine vs research height & weight data – shows a closer agreement than this one (but still not quite ideal…)